Ironback vs LLMWise

Side-by-side comparison to help you choose the right product.

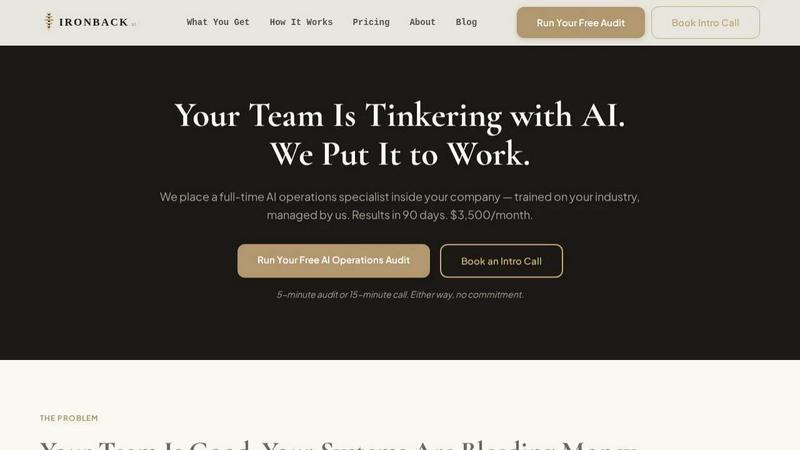

Ironback

Ironback embeds a dedicated AI operations specialist in your business to streamline processes and boost efficiency, for $3,500 a month.

Last updated: April 4, 2026

LLMWise

Access GPT, Claude, Gemini, and more through one API that auto-routes each prompt to the best model, and pay only for what you use.

Last updated: February 26, 2026

Feature Comparison

Ironback

Full-Time AI Operations Specialist

Ironback places a dedicated AI operations specialist in your company, trained on your processes and the specifics of your industry. The specialist works as part of your team, providing ongoing support and expertise tailored to your operational needs.

Automated Call Handling

AI voice agents handle after-hours calls, so no customer call goes unanswered. Missed calls are texted back quickly, and emergency dispatch automation triages urgent jobs and assigns them promptly so your team can respond effectively.
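
The call-handling flow above can be pictured as a small decision function. This is a minimal sketch, not Ironback's implementation: the `Call` type, the `handle_after_hours_call` function, the emergency keyword list, and the action strings are all hypothetical, and keyword matching stands in for whatever classification the voice agents actually perform.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical keywords that mark a call as an emergency; a real system would
# rely on the voice agent's own classification instead of substring matching.
EMERGENCY_KEYWORDS = ("burst pipe", "flood", "gas leak", "no heat", "sparking")


@dataclass
class Call:
    caller: str
    answered: bool        # False when nobody (human or AI agent) picked up
    transcript: str = ""  # what the caller told the AI voice agent


def handle_after_hours_call(call: Call, on_call_techs: List[str]) -> str:
    """Return the follow-up action for one after-hours call (hypothetical actions)."""
    if not call.answered:
        return f"text-back:{call.caller}"  # missed call: send a text back
    text = call.transcript.lower()
    if any(keyword in text for keyword in EMERGENCY_KEYWORDS):
        if on_call_techs:
            return f"dispatch:{on_call_techs[0]}"  # urgent: assign the first on-call tech
        return "escalate:no-tech-available"  # urgent but nobody on call
    return "schedule:next-business-day"  # non-urgent: book during business hours
```

For example, an answered call whose transcript mentions a burst pipe returns `dispatch:` plus the first on-call technician, while an unanswered call returns a text-back action.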

Streamlined Estimating and Quoting

Using AI-assisted takeoffs, Ironback reduces estimating time by 50-70%. Photo-based workflows replace traditional takeoff methods, improving speed and accuracy while cutting manual labor.

Compliance and Documentation Automation

Ironback simplifies compliance management by replacing paper documentation with digital job forms. Inspection reports auto-populate, and compliance paperwork, including OSHA and EPA requirements, is handled efficiently, reducing the administrative burden.

LLMWise

Smart Routing

Smart routing automatically sends each prompt to the model best suited to the task. Whether the task is coding, creative writing, or translation, LLMWise selects the most suitable model, so users get high-quality responses matched to their needs.
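
To make the routing idea concrete, here is a minimal sketch assuming a simple keyword classifier. The keyword lists, the model names (`code-model`, `translation-model`, and so on), and the `classify` and `route` functions are hypothetical placeholders; LLMWise's actual routing logic and model identifiers are not described here.

```python
# Hypothetical keyword lists for guessing a prompt's task type.
TASK_KEYWORDS = {
    "code": ("function", "bug", "python", "compile", "stack trace"),
    "translation": ("translate", "in french", "in spanish", "into german"),
    "creative": ("poem", "story", "slogan", "tagline"),
}

# Hypothetical task -> model table; placeholder names, not real LLMWise model IDs.
ROUTES = {
    "code": "code-model",
    "translation": "translation-model",
    "creative": "creative-model",
}
DEFAULT_MODEL = "general-model"


def classify(prompt: str) -> str:
    """Return the first task whose keywords appear in the prompt, else 'general'."""
    text = prompt.lower()
    for task, keywords in TASK_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return task
    return "general"


def route(prompt: str) -> str:
    """Map a prompt to a model name via its detected task type."""
    return ROUTES.get(classify(prompt), DEFAULT_MODEL)
```

With this table, `route("Translate this paragraph into German")` returns `"translation-model"`. A production router would more likely use a trained classifier than substring matching, which misfires on words such as "history" that contain "story".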

Compare & Blend

Compare and blend lets users run a prompt across multiple models at the same time. The side-by-side results help developers evaluate each model's strengths and weaknesses, and the blend function then combines the best outputs from different models into a single, more robust response.
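
Compare and blend amounts to a fan-out followed by a merge. The sketch below assumes each model is a plain Python callable and that blending works by passing all candidate answers to a synthesizer model; both the `Model` type and the synthesizer approach are assumptions for illustration, not LLMWise's documented behavior.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

Model = Callable[[str], str]  # hypothetical stand-in for one model call


def compare(prompt: str, models: Dict[str, Model]) -> Dict[str, str]:
    """Send the same prompt to every model concurrently and collect outputs by model name."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {name: pool.submit(model, prompt) for name, model in models.items()}
        return {name: future.result() for name, future in futures.items()}


def blend(prompt: str, models: Dict[str, Model], synthesizer: Model) -> str:
    """Compare first, then ask a synthesizer model to merge the candidate answers."""
    outputs = compare(prompt, models)
    candidates = "\n".join(f"[{name}] {text}" for name, text in outputs.items())
    return synthesizer(f"Question: {prompt}\n{candidates}")
```

Running the models on a thread pool mirrors the "simultaneously" part of the feature: for network-bound calls, the total wait is roughly that of the slowest model rather than the sum of all of them.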

Always Resilient

LLMWise is built for resilience. Its circuit-breaker failover mechanism reroutes requests to backup models when a primary provider goes down, so applications stay operational and users keep uninterrupted access to AI capabilities.
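
A circuit breaker with ordered fallbacks can be sketched as follows. The `CircuitBreaker` class, its `threshold` and `cooldown` parameters, and `call_with_failover` are hypothetical; LLMWise's actual thresholds and retry rules are not stated here.

```python
import time
from typing import Callable, Dict, List, Optional, Tuple

Provider = Tuple[str, Callable[[str], str]]  # (provider name, hypothetical call function)


class CircuitBreaker:
    """Trips open after `threshold` consecutive failures; resets after `cooldown` seconds."""

    def __init__(self, threshold: int = 3, cooldown: float = 30.0) -> None:
        self.threshold = threshold
        self.cooldown = cooldown
        self.failures = 0
        self.opened_at: Optional[float] = None

    def available(self) -> bool:
        if self.opened_at is None:
            return True
        if time.monotonic() - self.opened_at >= self.cooldown:
            # Cooldown elapsed: close the breaker so the provider is tried again.
            self.opened_at = None
            self.failures = 0
            return True
        return False

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.threshold:
            self.opened_at = time.monotonic()


def call_with_failover(
    prompt: str, providers: List[Provider], breakers: Dict[str, CircuitBreaker]
) -> Tuple[str, str]:
    """Try providers in priority order, skipping any whose breaker is open."""
    last_error: Optional[Exception] = None
    for name, call in providers:
        breaker = breakers[name]
        if not breaker.available():
            continue  # breaker is open: go straight to the next backup
        try:
            result = call(prompt)
        except Exception as exc:  # treat any provider error as a failure
            breaker.record_failure()
            last_error = exc
            continue
        breaker.record_success()
        return name, result
    raise RuntimeError("all providers are unavailable") from last_error
```

Once the primary provider has failed `threshold` times in a row, its breaker opens and requests go directly to the backup without waiting on the failing provider until the cooldown elapses. This simplified version fully closes the breaker after the cooldown instead of using a separate half-open trial state.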

Test & Optimize

Built-in benchmarking suites and batch testing let developers run optimization policies that prioritize speed, cost, or reliability. Automated regression checks verify that new updates do not degrade performance, so teams can keep improving their applications on LLMWise.
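
A batch benchmark with policy-based selection and a regression check might look like the sketch below. `Stats`, `benchmark`, `POLICIES`, `pick_model`, and `is_regression`, along with the pass/fail `check` callback and the 2% tolerance, are hypothetical names and values chosen for illustration, not LLMWise's API.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List

Model = Callable[[str], str]        # hypothetical stand-in for one model call
Check = Callable[[str, str], bool]  # hypothetical pass/fail check on (prompt, output)


@dataclass
class Stats:
    avg_latency: float   # seconds per request
    total_cost: float    # in whatever unit cost_per_call uses
    success_rate: float  # fraction of prompts whose output passed the check


def benchmark(model: Model, prompts: List[str], cost_per_call: float, check: Check) -> Stats:
    """Batch-run one model over a prompt set and summarize speed, cost, and reliability."""
    if not prompts:
        raise ValueError("benchmark needs at least one prompt")
    latencies: List[float] = []
    passed = 0
    for prompt in prompts:
        start = time.perf_counter()
        output = model(prompt)
        latencies.append(time.perf_counter() - start)
        passed += int(check(prompt, output))
    n = len(prompts)
    return Stats(sum(latencies) / n, cost_per_call * n, passed / n)


# Each policy maps Stats to a score where lower is better.
POLICIES: Dict[str, Callable[[Stats], float]] = {
    "speed": lambda s: s.avg_latency,
    "cost": lambda s: s.total_cost,
    "reliability": lambda s: -s.success_rate,
}


def pick_model(results: Dict[str, Stats], policy: str) -> str:
    """Choose the model that scores best under the named optimization policy."""
    score = POLICIES[policy]
    return min(results, key=lambda name: score(results[name]))


def is_regression(baseline: Stats, candidate: Stats, tolerance: float = 0.02) -> bool:
    """Flag an update whose success rate falls more than `tolerance` below the baseline."""
    return candidate.success_rate < baseline.success_rate - tolerance
```

Each policy is just a different scoring function over the same benchmark results, so switching between speed, cost, and reliability does not require rerunning the batch.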

Use Cases

Ironback

Enhancing Customer Engagement

With automated follow-ups and review requests, Ironback helps maintain customer relationships long after a job is completed. This proactive communication builds loyalty and encourages repeat business.

Reducing Operational Costs

By automating manual tasks, service companies can save up to $200,000 annually. Ironback's AI specialist covers multiple operational areas, eliminating the need to hire additional staff or invest in underused software.
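
The savings figure can be set against Ironback's listed price of $3,500 a month, which comes to $42,000 a year. This sketch is only arithmetic on the two numbers quoted on this page; `net_annual_savings` is a hypothetical helper, and actual savings depend on the business.

```python
MONTHLY_FEE = 3_500  # Ironback's listed monthly price, in US dollars


def net_annual_savings(gross_annual_savings: float, monthly_fee: float = MONTHLY_FEE) -> float:
    """Savings left after paying twelve months of the subscription fee."""
    return gross_annual_savings - 12 * monthly_fee


print(net_annual_savings(200_000))  # upper-bound figure quoted above: 158000
print(net_annual_savings(42_000))   # break-even point: 0
```

Under these figures, the upper-bound scenario leaves $158,000 in net annual savings, and gross savings must exceed $42,000 a year for the subscription to pay for itself.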

Improving Job Dispatch Efficiency

After-hours calls are no longer a bottleneck. Ironback's AI voice agents ensure that emergency jobs are dispatched promptly, allowing for better resource allocation and customer satisfaction.

Optimizing Resource Management

By harnessing AI for estimating and scheduling, companies can reallocate their human resources to more strategic tasks. This optimization leads to increased productivity and better job completion rates.

LLMWise

Application Development

Developers can use LLMWise to streamline development by accessing multiple AI models for different functions through one API. Tasks range from generating code snippets to drafting customer support responses, and this flexibility helps teams improve both productivity and quality.

Content Creation

Content creators can use LLMWise to compare and blend outputs from different models when writing articles, blog posts, or marketing copy. Combining the strongest ideas from each model into one draft produces more compelling copy and saves time and effort.

Language Translation

For businesses operating in multiple languages, LLMWise can be used to translate content efficiently. By routing translation requests to the most suitable model, users ensure high-quality translations that maintain the original message's intent and tone.

AI Research

AI researchers can use LLMWise to test various models against specific datasets. Comparing model outputs gives insight into each model's performance, capabilities, and potential areas for improvement.

Overview

About Ironback

Ironback is an AI operations solution for service companies that want to improve operational efficiency and reduce costs. It embeds a full-time AI operations specialist in your organization to take on critical tasks such as call handling, estimating, scheduling, and compliance management. Ironback guarantees significant savings (over $50,000 in a two-week assessment) and targets the process inefficiencies that affect many service businesses.

Ironback is aimed at companies that have run into the limits of traditional hiring and software solutions. With a dedicated AI specialist trained for your industry, your team can focus on what it does best while time-consuming tasks are automated, with tangible improvements within 90 days.

About LLMWise

LLMWise is an API that provides access to large language models (LLMs) from leading AI providers, including OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek, through a single gateway. Its main value is removing the need to manage multiple AI subscriptions and APIs. Intelligent routing matches each prompt with the best-suited model, so developers can optimize their applications by using the right model for each task. The service is aimed at developers, startups, and enterprises that want to use the strengths of different LLMs without managing individual contracts and subscriptions, leaving them free to focus on building their products.

Frequently Asked Questions

Ironback FAQ

How does Ironback integrate into my existing systems?

Ironback's AI operations specialist is trained to understand your processes and systems. The specialist works within your existing tools and extends what they can do through automation and AI.

What is the typical timeframe to see results with Ironback?

Most companies start noticing significant improvements within 90 days of integrating Ironback's services. This timeframe includes training and adaptation to your specific business needs.

What types of companies can benefit from using Ironback?

Ironback is designed for service companies across various industries, including construction, HVAC, plumbing, and more. If your business relies on efficient operations and customer service, Ironback can provide substantial value.

Is there a commitment required to start with Ironback?

You can start with a free AI operations audit or an introductory call. No commitment is required to explore how Ironback can optimize your operations and drive savings.

LLMWise FAQ

What types of models does LLMWise support?

LLMWise supports a wide range of models from major providers including OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek. It currently offers access to over 62 models, allowing users to choose the best fit for their specific tasks.

How does the pricing structure work?

LLMWise operates on a pay-per-use model with no subscription fees. Users can start with 20 free credits, and they only pay for the credits they consume, making it cost-effective and flexible for varying usage levels.

Can I use my existing API keys with LLMWise?

Yes. LLMWise offers a Bring Your Own Key (BYOK) feature: users can connect their existing API keys to access models at provider prices, or pay per use with LLMWise credits, which gives them flexibility in how they manage costs.
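
The pricing answers above (20 free credits, pay-per-use, or BYOK at provider prices) can be modeled as a per-request billing decision. This is a hypothetical sketch: the `Account` type, `bill_request`, and the idea that each request has a fixed `credit_cost` are assumptions, not LLMWise's published billing API.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Account:
    credits: float = 20.0  # starts with the 20 free credits mentioned above
    own_keys: Dict[str, str] = field(default_factory=dict)  # provider name -> user's own API key


def bill_request(account: Account, provider: str, credit_cost: float) -> str:
    """Decide how one request is paid for; deduct credits only when no BYOK key exists."""
    if provider in account.own_keys:
        # BYOK: the request runs on the user's key and is billed by the provider.
        return "byok"
    if credit_cost > account.credits:
        raise ValueError("insufficient credits")
    account.credits -= credit_cost  # pay-per-use: only consumed credits are charged
    return "credits"
```

In this model, a user with their own OpenAI key never spends credits on OpenAI requests, while requests to other providers draw down the credit balance.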

What happens if a model provider experiences downtime?

LLMWise has a built-in circuit-breaker failover mechanism that automatically reroutes requests to backup models when a primary model provider goes down. This ensures that your applications remain operational without interruption, maintaining high availability.

Alternatives

Ironback Alternatives

Ironback embeds a dedicated AI operations specialist in service companies to improve critical functions such as call handling, estimating, scheduling, and compliance, helping businesses cut costs and operate more efficiently. Users often look for alternatives to Ironback because of pricing, features, or platform compatibility, or because their operational requirements shift as the business grows. When evaluating alternatives, consider scalability, ease of integration, and the range of services offered, so that the new solution meets your business's specific needs.

LLMWise Alternatives

LLMWise is an API that provides unified access to large language models (LLMs) such as GPT, Claude, and Gemini, among others. It falls under the AI Assistants category and lets developers use the best model for each task without managing multiple providers. Users often look for alternatives to LLMWise because of pricing, specific feature sets, or platform requirements. When choosing an alternative, consider model performance, ease of integration, flexibility in payment structures, and tools for testing and optimizing models for your use case.