Agenta vs Fallom

Side-by-side comparison to help you choose the right product.

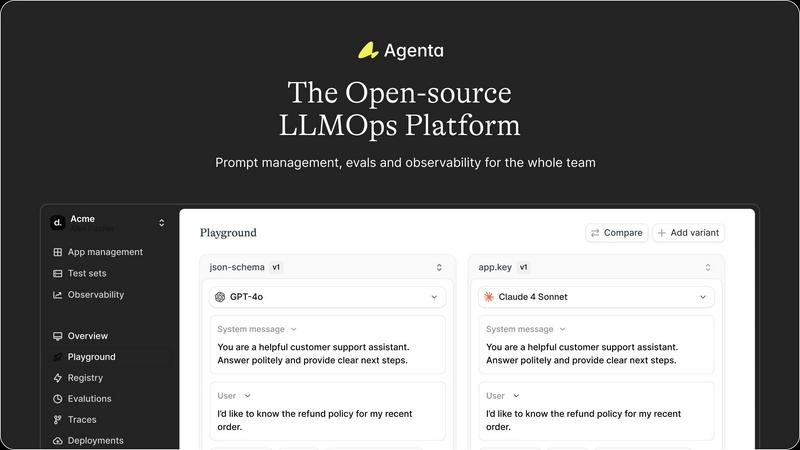

Agenta is an open-source LLMOps platform that centralizes prompt management, evaluation, and observability for AI teams.

Last updated: March 1, 2026

Fallom offers real-time observability for LLM applications, covering request tracking, debugging, and cost management.

Last updated: February 28, 2026

Feature Comparison

Agenta

Centralized Workspace

Agenta stores all prompts, evaluations, and traces in one platform, so teams no longer have to stitch together separate tools. Because everyone on the team works from the same information, collaboration and communication become easier and more efficient.

Unified Playground

The platform includes a playground where teams can experiment with and iterate on prompts side by side. Complete version history lets users track changes over time, and teams can compare different models to find the best-performing option without being locked into a single vendor.

Automated Evaluation

Agenta runs systematic, automated evaluations so teams can run experiments, track results, and validate every change. Teams can plug in any evaluator, built-in or custom, which replaces guesswork with measured results.
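To make the idea concrete, here is a minimal, tool-agnostic sketch of what an automated evaluation run does: score every prompt variant against the same test set with one evaluator and compare the averages. It is not Agenta's SDK or API. The `run_evaluation` and `exact_match` functions, the variant names, and the lambda stand-ins for LLM calls are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

TestCase = Tuple[str, str]  # (input, expected output)
Evaluator = Callable[[str, str], float]  # (output, expected) -> score in [0, 1]


def exact_match(output: str, expected: str) -> float:
    """Hypothetical evaluator: 1.0 if the output matches, ignoring case and surrounding spaces."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_evaluation(
    variants: Dict[str, Callable[[str], str]],
    test_set: List[TestCase],
    evaluator: Evaluator,
) -> Dict[str, float]:
    """Run every variant on the same test set and return its mean score."""
    if not test_set:
        raise ValueError("test_set must not be empty")
    results: Dict[str, float] = {}
    for name, generate in variants.items():
        scores = [evaluator(generate(inp), expected) for inp, expected in test_set]
        results[name] = sum(scores) / len(scores)
    return results


if __name__ == "__main__":
    # Stand-ins for two prompt variants; a real run would call an LLM here.
    capitals = {"France": "Paris", "Japan": "Tokyo", "Italy": "Rome"}
    variants = {
        "v1-terse": lambda country: capitals.get(country, "unknown"),
        "v2-verbose": lambda country: f"The capital is {capitals.get(country, 'unknown')}.",
    }
    test_set = [("France", "Paris"), ("Japan", "Tokyo"), ("Italy", "Rome")]
    scores = run_evaluation(variants, test_set, exact_match)
    # Print variants from best to worst mean score.
    for name, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{name}: {score:.2f}")
```

Here exact match scores the verbose variant at zero even though its answers are correct, the kind of finding that might lead a team to swap in a more lenient custom evaluator with the same `(output, expected) -> float` signature.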

Observability Tools

Agenta's observability features let teams trace every request, pinpoint where failures occur, and annotate traces together. Annotations turn feedback into concrete fixes, which speeds up debugging and supports continuous improvement of AI systems.

Fallom

Real-Time Observability

Fallom delivers real-time observability for AI agents, letting teams track tool calls, analyze timing, and debug issues. A live dashboard shows every LLM call along with its performance metrics.

Cost Attribution

Fallom tracks spending per model, user, and team, giving organizations the cost transparency they need for budgeting and chargebacks. With that breakdown, businesses can see where their AI spend goes and allocate resources accordingly.
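The arithmetic behind per-model, per-user, and per-team attribution can be sketched as follows. This is an illustration, not Fallom's implementation: the `LLMCall` record, the model names, and the prices in `PRICES_PER_1K` are hypothetical placeholders, not real vendor pricing. Each call's cost is derived from its token counts and then summed along whichever dimension is being charged back.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal
from typing import Dict, Iterable

# Hypothetical prices in USD per 1,000 tokens. Placeholders, NOT real vendor pricing.
PRICES_PER_1K: Dict[str, Dict[str, Decimal]] = {
    "model-a": {"input": Decimal("0.50"), "output": Decimal("1.50")},
    "model-b": {"input": Decimal("0.10"), "output": Decimal("0.20")},
}

DIMENSIONS = {"model", "user", "team"}


@dataclass
class LLMCall:
    """One traced LLM call with the context needed for attribution (hypothetical schema)."""
    model: str
    user: str
    team: str
    input_tokens: int
    output_tokens: int


def call_cost(call: LLMCall) -> Decimal:
    """Cost of one call: input and output tokens priced separately per 1K tokens."""
    price = PRICES_PER_1K[call.model]
    return (call.input_tokens * price["input"] + call.output_tokens * price["output"]) / 1000


def attribute_costs(calls: Iterable[LLMCall], dimension: str) -> Dict[str, Decimal]:
    """Sum call costs grouped by 'model', 'user', or 'team'."""
    if dimension not in DIMENSIONS:
        raise ValueError(f"dimension must be one of {sorted(DIMENSIONS)}")
    totals: Dict[str, Decimal] = defaultdict(Decimal)
    for call in calls:
        totals[getattr(call, dimension)] += call_cost(call)
    return dict(totals)


if __name__ == "__main__":
    calls = [
        LLMCall("model-a", "alice", "search", 1000, 200),
        LLMCall("model-b", "bob", "search", 2000, 500),
        LLMCall("model-a", "alice", "support", 400, 100),
    ]
    for team, cost in attribute_costs(calls, "team").items():
        print(f"{team}: ${cost:.2f}")
```

`Decimal` is used instead of `float` so that currency totals add up exactly; grouping by `"user"` or `"model"` instead of `"team"` reuses the same per-call costs.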

Compliance and Audit Trails

Fallom supports regulatory compliance with complete audit trails that address requirements such as the EU AI Act, SOC 2, and GDPR. Organizations can keep detailed records of inputs and outputs, model versions, and user consent, so they are prepared for audits and regulatory scrutiny.

Timing Waterfall Analysis

Fallom's timing waterfall helps teams debug latency in complex workflows. By visualizing how long each step of a multi-step agent process takes, users can identify bottlenecks and make their LLM interactions more efficient.
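A timing waterfall places each step of a run on a shared time axis, so overlapping and slow steps stand out. The sketch below renders a plain-text version and picks the longest step as the bottleneck. It assumes a flat list of steps with start and end offsets in milliseconds; the `Step` type, the step names, and the timings are hypothetical, and Fallom's actual trace format and UI are not shown.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Step:
    """One step of a hypothetical agent run, timed relative to the run's start."""
    name: str
    start_ms: float
    end_ms: float

    @property
    def duration_ms(self) -> float:
        return self.end_ms - self.start_ms


def render_waterfall(steps: List[Step], width: int = 40) -> List[str]:
    """Return one text row per step, with its bar placed on a shared time axis."""
    if not steps:
        raise ValueError("need at least one step")
    total = max(s.end_ms for s in steps)
    if total <= 0:
        raise ValueError("run must have a positive end time")
    scale = width / total  # characters per millisecond
    rows = []
    for s in sorted(steps, key=lambda s: s.start_ms):
        offset = int(s.start_ms * scale)
        length = max(1, int(s.duration_ms * scale))  # always draw at least one cell
        bar = " " * offset + "#" * length
        rows.append(f"{s.name:<12}|{bar:<{width}}| {s.duration_ms:>6.0f} ms")
    return rows


def bottleneck(steps: List[Step]) -> Step:
    """Return the step with the longest duration."""
    return max(steps, key=lambda s: s.duration_ms)


if __name__ == "__main__":
    run = [
        Step("plan", 0, 120),
        Step("tool:search", 120, 980),
        Step("tool:lookup", 130, 400),  # runs in parallel with tool:search
        Step("llm:answer", 980, 1400),
    ]
    for row in render_waterfall(run):
        print(row)
    print("bottleneck:", bottleneck(run).name)
```

Treating the longest step as the bottleneck is a simplification: when steps run in parallel, the chain of dependent steps (the critical path) is what determines total latency.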

Use Cases

Agenta

Collaborative LLM Development

Agenta suits teams building LLM applications that need a structured way to collaborate. With prompt management and evaluation in one place, team members work from the same data, which reduces miscommunication and improves productivity.

Iterative Prompt Testing

Teams can use Agenta to iterate on prompts quickly in a controlled environment. In the unified playground, developers can test multiple variations, see which prompts produce the best results, and shorten the development cycle.

Performance Monitoring

Agenta lets teams monitor production systems in real time and detect regressions and performance issues as they arise, so problems can be addressed before they degrade the quality of AI applications.

User Feedback Integration

With Agenta's trace annotation features, teams can collect user feedback directly within the platform. That feedback helps them refine their applications based on real-world usage.

Fallom

AI Workflow Optimization

Teams can use Fallom's real-time performance data to find inefficiencies in AI workflows, such as slow steps or costly calls, and fix them so their LLM applications make better use of the models they run.

Budget Management

With detailed cost attribution, finance teams can use Fallom to manage AI budgets. This visibility lets organizations control the costs of model usage and allocate resources based on actual consumption.

Compliance Readiness

Organizations in regulated industries can use Fallom to support compliance with the regulations that apply to them. Its audit trails and privacy controls help businesses meet regulatory requirements with confidence.

Performance Testing and Debugging

Development teams can use Fallom to run performance tests on LLM outputs and catch regressions before they reach production. Monitoring and evaluating model performance in real time helps improve product quality and user satisfaction.

Overview

About Agenta

Agenta is an open-source LLMOps platform that helps AI teams build and deploy reliable large language model (LLM) applications. Designed for both developers and subject matter experts, it brings prompt management, evaluation, and observability into a single platform. Because LLM behavior is unpredictable, LLM projects tend to scatter work across tools and create silos between teams. Agenta replaces those scattered workflows with a structured process in which teams experiment with prompts, run systematic evaluations, and debug production issues in one place. The result is less guesswork when debugging, faster delivery of reliable AI applications, and a shared workflow in which every team member can contribute and best practices are followed throughout the LLM development lifecycle.

About Fallom

Fallom is an AI-native observability platform built for organizations running large language model (LLM) and agent workloads. It gives teams visibility into every LLM interaction in production through end-to-end tracing of prompts, outputs, tool calls, tokens, latency, and per-call costs. Fallom adds user-, session-, and customer-level context and provides detailed timing waterfalls for complex, multi-step agents. Its enterprise-grade compliance features, including audit trails for logging, model versioning, and consent tracking, make it well suited to businesses operating under complex regulatory requirements. An OpenTelemetry-native SDK lets teams instrument their applications in minutes and then monitor usage in real time, debug issues, and attribute costs across models, users, and teams.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps refers to the practices and tools for developing, deploying, and maintaining applications built on large language models (LLMs). Agenta applies these practices by providing a structured platform for collaboration, experimentation, and observability.

How does Agenta improve collaboration among team members?

Agenta centralizes workflows by integrating prompt management, evaluation, and observability into a single platform. This eliminates silos, allowing developers, product managers, and domain experts to work together more efficiently.

Can Agenta integrate with existing tools?

Yes. Agenta integrates with various frameworks and model providers, including LangChain and OpenAI, so teams can add it to their existing tech stack without disruption.

Is Agenta suitable for both developers and non-technical users?

Yes. Agenta's user interface lets domain experts and product managers run evaluations and experiment with prompts without writing code, which makes collaboration across a mixed team easier.

Fallom FAQ

What is Fallom?

Fallom is an AI-native observability platform for large language model and agent workloads. It gives teams detailed insight into LLM interactions and helps them meet compliance requirements and optimize AI operations.

How does Fallom improve LLM performance?

Fallom helps teams improve the performance of their LLM applications by providing real-time observability into tool calls, latency, and costs. With that data, organizations can identify inefficiencies and optimize their AI processes.

Is Fallom suitable for regulated industries?

Yes. Fallom is built with compliance in mind and offers audit trails, privacy controls, and security features designed for the needs of regulated industries, helping organizations adhere to regulatory requirements.

How quickly can I start using Fallom?

Fallom is designed for rapid deployment: with its OpenTelemetry-native SDK, teams can set up and start tracing their LLM interactions in under five minutes, so they get insights quickly with minimal disruption.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform for developing large language model applications. It lets AI teams manage prompt experiments, evaluations, and debugging in one place, work that otherwise tends to become scattered and inefficient. Users look for alternatives for various reasons, including pricing, feature sets, or platform requirements that Agenta does not fully meet. When comparing options, consider ease of use, integration capabilities, and the specific needs of your team. Look for platforms with strong collaboration tools, automated evaluation, and comprehensive observability so that switching does not disrupt your LLM development work.

Fallom Alternatives

Fallom is an observability platform for large language models (LLMs) and AI agents that lets organizations monitor, track, and optimize their AI operations in real time. Users look for alternatives for various reasons, including pricing, specific feature requirements, or compatibility with existing platforms. When evaluating alternatives, look for features that match your organization's needs, such as comprehensive tracking, cost management, compliance capabilities, and easy integration with your current systems.