Fallom

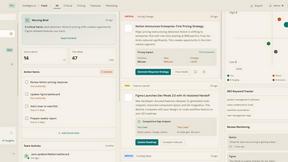

Fallom offers real-time observability for LLMs, enabling effortless tracking, debugging, and cost management for AI.

About Fallom

Fallom is an AI-native observability platform for organizations running large language model (LLM) and agent workloads. It gives teams visibility into every LLM interaction in production through end-to-end tracing that covers prompts, outputs, tool calls, tokens, latency, and per-call costs. Fallom also provides user, session, and customer-level context, along with timing waterfalls for multi-step agents. Its enterprise compliance features include audit trails for logging, model versioning, and consent tracking, which makes it useful for businesses subject to regulatory requirements. With its OpenTelemetry-native SDK, teams can instrument their applications in minutes, then monitor usage in real time, debug issues, and attribute costs across models, users, and teams.

Features of Fallom

Real-Time Observability

Fallom delivers real-time observability for AI agents, allowing teams to track tool calls, analyze timing, and debug issues. A live dashboard shows every LLM call along with its performance metrics, so teams can see how their AI systems behave in production.

Cost Attribution

With Fallom, organizations can track spending per model, user, and team, which gives them the cost transparency needed for budgeting and chargeback. Businesses can use this data to reduce their AI spending and make informed decisions about resource allocation.
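
As an illustration of what per-model, per-user, and per-team attribution involves, here is a minimal Python sketch. It is not Fallom's SDK or API: the `LlmCall` record, the `attribute_costs` helper, the model names, and the prices are all hypothetical, chosen only to show the aggregation.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens; real prices depend on the provider.
PRICE_PER_1K_TOKENS = {
    "model-a": {"input": 0.0010, "output": 0.0020},
    "model-b": {"input": 0.0100, "output": 0.0300},
}


@dataclass(frozen=True)
class LlmCall:
    """One traced LLM call (hypothetical record shape)."""
    model: str
    user: str
    team: str
    input_tokens: int
    output_tokens: int


def call_cost(call):
    """Return the cost of a single call in USD."""
    price = PRICE_PER_1K_TOKENS[call.model]
    tokens_cost = (
        call.input_tokens * price["input"]
        + call.output_tokens * price["output"]
    )
    return tokens_cost / 1000


def attribute_costs(calls, key):
    """Sum call costs grouped by `key`: "model", "user", or "team"."""
    totals = defaultdict(float)
    for call in calls:
        totals[getattr(call, key)] += call_cost(call)
    return dict(totals)
```

Calling `attribute_costs(calls, "team")` on a list of such records returns a mapping from team name to total spend, which is the kind of figure a chargeback report would start from.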

Compliance and Audit Trails

Fallom supports regulatory compliance with complete audit trails that address requirements such as the EU AI Act, SOC 2, and GDPR. Organizations can maintain detailed records of input/output logging, model versioning, and user consent tracking, so they are prepared for audits and regulatory scrutiny.
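
To make the idea of an audit trail concrete, the following Python sketch records the model version, a consent flag, and digests of the prompt and output for each call. It does not describe how Fallom stores audit data. The field names are hypothetical, and chaining each record to the previous one with a SHA-256 hash is an assumption added here as one common way to make tampering detectable.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first record


def _digest(text):
    """Return the hex SHA-256 digest of a string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def append_audit_record(log, *, prompt, output, model_version,
                        user_consented, timestamp):
    """Append one audit record to `log`, chained to the previous record."""
    body = {
        "timestamp": timestamp,
        "model_version": model_version,
        "user_consented": user_consented,
        "prompt_sha256": _digest(prompt),
        "output_sha256": _digest(output),
        "prev_hash": log[-1]["record_hash"] if log else GENESIS_HASH,
    }
    record = {**body, "record_hash": _digest(json.dumps(body, sort_keys=True))}
    log.append(record)
    return record


def verify_chain(log):
    """Return True if no record was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if record["prev_hash"] != prev_hash:
            return False
        if _digest(json.dumps(body, sort_keys=True)) != record["record_hash"]:
            return False
        prev_hash = record["record_hash"]
    return True
```

This sketch stores digests rather than raw text to keep the example small; a system that must reproduce inputs and outputs for an auditor would also need to retain the content itself under appropriate access controls.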

Timing Waterfall Analysis

Fallom's timing waterfall feature allows teams to debug latency issues in complex workflows. By visualizing the timing of each step in multi-step agent processes, users can identify bottlenecks and improve the efficiency of their LLM interactions.
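
The idea behind a timing waterfall can be sketched in a few lines of Python. This is an illustration only, not Fallom's implementation: the `Step` record, the step names, and the text rendering are hypothetical, and the example assumes each step's start and end times are already known in milliseconds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One step of a multi-step agent run (hypothetical record shape)."""
    name: str
    start_ms: int
    end_ms: int

    @property
    def duration_ms(self):
        return self.end_ms - self.start_ms


def slowest_step(steps):
    """Return the step with the longest duration, the likely bottleneck."""
    return max(steps, key=lambda s: s.duration_ms)


def render_waterfall(steps, width=40):
    """Render the steps as text bars offset by their start time."""
    t0 = min(s.start_ms for s in steps)
    total = max(s.end_ms for s in steps) - t0
    scale = width / total if total else 0
    lines = []
    for s in sorted(steps, key=lambda s: s.start_ms):
        offset = int((s.start_ms - t0) * scale)
        length = max(1, int(s.duration_ms * scale))
        bar = " " * offset + "#" * length
        lines.append(f"{s.name:<12}{bar} {s.duration_ms} ms")
    return "\n".join(lines)
```

Printing `render_waterfall(steps)` for a run lays the steps out left to right in time, so a long bar stands out immediately, and `slowest_step(steps)` names the step to investigate first.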

Use Cases of Fallom

AI Workflow Optimization

Teams can use Fallom to optimize AI workflows by analyzing real-time performance data and identifying inefficiencies. This helps organizations get more out of their LLM workloads and improve productivity.

Budget Management

With detailed cost attribution, finance teams can use Fallom to manage budgets. This visibility lets organizations control the cost of AI model usage and allocate resources based on actual consumption.

Compliance Readiness

Organizations operating in regulated industries can use Fallom to support their compliance work. Its audit trails and privacy controls help businesses meet the regulatory requirements that apply to them.

Performance Testing and Debugging

Development teams can use Fallom to run performance tests on LLM outputs and catch regressions before they reach production. Monitoring and evaluating model performance in real time helps improve product quality and user satisfaction.
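
As a rough picture of what catching a regression means, the Python sketch below compares evaluation scores from a baseline run against a candidate run and flags any metric whose mean drops by more than a tolerance. It is not Fallom's evaluation feature: the `find_regressions` helper, the metric names, and the 0.05 tolerance are hypothetical, and scores are assumed to lie between 0 and 1 with higher being better.

```python
from statistics import mean


def find_regressions(baseline, candidate, max_drop=0.05):
    """Return metrics whose mean score dropped by more than `max_drop`.

    `baseline` and `candidate` map a metric name to a list of scores.
    Metrics missing from `candidate` are skipped rather than flagged.
    """
    regressions = {}
    for metric, base_scores in baseline.items():
        if metric not in candidate:
            continue
        drop = mean(base_scores) - mean(candidate[metric])
        if drop > max_drop:
            regressions[metric] = round(drop, 4)
    return regressions
```

A check like this could run before a prompt or model change ships, failing the release when `find_regressions` returns a non-empty result.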

Frequently Asked Questions

What is Fallom?

Fallom is an AI-native observability platform designed for large language model and agent workloads. It offers detailed insight into LLM interactions, supports compliance, and helps teams optimize their AI operations.

How does Fallom improve LLM performance?

Fallom helps teams improve LLM performance by providing real-time observability into tool calls, latency, and costs. With this data, organizations can identify inefficiencies and optimize their AI processes.

Is Fallom suitable for regulated industries?

Yes. Fallom is built for compliance and offers audit trails, privacy controls, and security features designed to meet the needs of regulated industries.

How quickly can I start using Fallom?

Fallom is designed for rapid deployment with its OpenTelemetry-native SDK, allowing teams to set up and start tracing their LLM interactions in under five minutes. This quick setup minimizes disruption and accelerates insights.
